Mojo Updates

Tom Haddon

on 12 January 2016

It’s been a while since we’ve talked about Mojo, and since then, there have been quite a few changes. I wanted to take the opportunity to highlight some of the recent updates to Mojo and then talk about our plans for future improvements.

Mojo what?

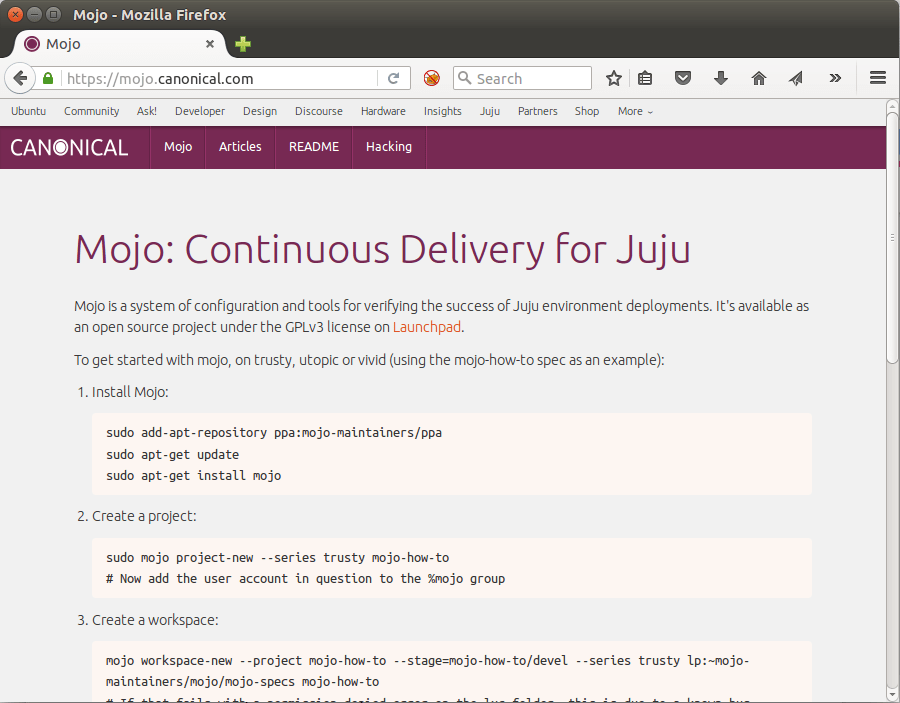

So what is Mojo anyway? It’s a tool for deploying, managing and testing Juju services. It allows you to define in a repository how you’d like your service to be composed, and then deploy it in a repeatable manner.

For more info, see our previous article and the two follow-up pieces (Part 2, Part 3), as well as the documentation available at https://mojo.canonical.com.

What’s new?

Simplification

We’re trying to simplify specifications as much as possible, so the “repo” and “secrets” phases are no longer needed in Mojo. You can still call them explicitly if you need to, but Mojo will automatically create a charm repository for you from charms you’ve assembled in a collect phase, and it will automatically pull in secrets for you at the appropriate time too.

In the interests of simplification, we’ve also created a “containerless” project type. If your service doesn’t need to run any build steps within an LXC container, you no longer need to create one. You choose the project type when creating the project:

    # With a container
    mojo project-new my-stack-name -s trusty -c lxc
    # Without a container
    mojo project-new my-containerless-stack -s trusty -c containerless

Note that we’ve also updated “mojo project-new” and “mojo project-destroy” so that they no longer need to be called with sudo. This brings them in line with all other mojo commands, which will escalate privileges themselves if needed.

Transparency

A major goal of Mojo is transparency between those administering production services and developers working on them, so we added the $MOJO_LOGFILE environment variable. At Canonical we set that variable in production environments to a location that publishes to an internally visible web server, so that developers can see in real time the output of any Mojo commands run against their production service by SREs.

Speed

Some complex services have a lot of dependencies to collect. Recognising this, we’ve added a “concurrent” option to codetree, so that it will pull down resources as quickly as possible, while taking into account any dependencies based on the layout of the resources you’ve specified. For example, if you’ve specified a tarball that needs to live within another directory, codetree will first collect the parent directory and then the tarball.

This concurrency is the default in Mojo, so you don’t need to do anything special to take advantage of it.
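To make the ordering constraint concrete, here is a minimal Python sketch of one way to collect resources concurrently while still fetching parent directories before their contents. It is not codetree's actual implementation: the names `collection_waves`, `collect_all` and the `fetch` callback are hypothetical, and grouping by directory depth is a deliberately conservative assumption (it makes every shallower path finish first, not just true parents).

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import PurePosixPath


def collection_waves(destinations):
    """Group destination paths into waves by directory depth.

    Hypothetical helper: paths in one wave can be fetched concurrently,
    and a parent directory always lands in an earlier wave than anything
    that lives inside it.
    """
    by_depth = {}
    for dest in destinations:
        depth = len(PurePosixPath(dest).parts)
        by_depth.setdefault(depth, []).append(dest)
    return [sorted(by_depth[depth]) for depth in sorted(by_depth)]


def collect_all(destinations, fetch, max_workers=4):
    """Fetch each wave in parallel, finishing one wave before the next."""
    collected = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for wave in collection_waves(destinations):
            # extend() consumes the whole map iterator, so every fetch in
            # this wave has completed (or raised) before the next wave starts.
            collected.extend(pool.map(fetch, wave))
    return collected
```

Called with a list of destination paths and a `fetch` function that downloads a single resource, `collect_all` returns the results in wave order, so a directory such as `charms` is always collected before `charms/apache2`.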

Git support in Codetree

Mojo was originally written with support for Bazaar, but as Launchpad has grown support for Git, we’ve added Git support to Codetree so that you can now use Git repositories in your collect phases.

Features Based on Specification Scripts

The final group of new features are things we’ve added based on functionality found or needed in many specifications.

The first of these relates to the “verify” phase. When you bring up a new service you can get transient verify failures while, for instance, services start up or begin accepting connections. To accommodate this, we’ve added “retry” and “sleep” options to the verify phase, so that you can now do this:

verify retry=3 sleep=10

This will rerun the verify step up to three times, sleeping for 10 seconds between each attempt.
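The retry behaviour can be pictured with a short Python sketch. This is an illustration only, not Mojo's code: `run_with_retry` and its parameters are hypothetical names, and it assumes the first run does not count as a retry, so a step with retry=3 is executed at most four times.

```python
import time


def run_with_retry(step, retry=3, sleep=10, sleeper=time.sleep):
    """Run step() until it returns True, rerunning at most `retry` times.

    Assumption (not confirmed by Mojo's documentation): the initial run is
    not counted as a retry, so step() is called at most retry + 1 times.
    Returns a (succeeded, attempts) tuple.
    """
    attempts = 0
    while True:
        attempts += 1
        if step():
            return True, attempts
        if attempts > retry:
            # All retries are used up; report the failure to the caller.
            return False, attempts
        # Pause before the next attempt to let services finish starting.
        sleeper(sleep)
```

The `sleeper` argument exists only so the pause can be replaced in tests; in normal use the default `time.sleep` gives the 10 second gap between attempts.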

The second is a new phase we’ve added called “stop-on”. It works like this:

stop-on return-code=99 config=check-for-changes

This will run the “check-for-changes” script, and if it exits with a return code of 99, will stop further execution of the current manifest without raising an error. If it exits with a return code of 0, further phases in the manifest will be run. Any other return code will be considered an error.
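The three outcomes described above can be summarised in a few lines of Python. This is a sketch of the decision logic only; `classify_stop_on` is a hypothetical name and the string results are chosen for illustration, not taken from Mojo.

```python
def classify_stop_on(exit_code, stop_code=99):
    """Map a stop-on script's exit code to what happens to the manifest.

    Hypothetical helper mirroring the rules in the text:
    0 -> carry on, stop_code -> stop quietly, anything else -> error.
    """
    if exit_code == 0:
        return "continue"
    if exit_code == stop_code:
        return "stop"
    return "error"
```

With the manifest line above, `classify_stop_on(99)` gives "stop", `classify_stop_on(0)` gives "continue", and any other exit code, such as 1, gives "error".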

We added this because we wanted a way to check in CI whether there was new code to deploy before trying to do so, without raising a fatal error.

The third is the ability to show and diff juju-deployer configurations within Mojo. These are sub-commands of the deploy phase, and take the same options. They can be used to preview what rendered juju-deployer configuration files would look like, or to diff what’s currently deployed to the environment versus what is in the Mojo specification. For example, looking at the mojo.canonical.com documentation site:

    mojo deploy-show --options config=services local=services-secret

    series: trusty
    services:
      apache2:
        charm: apache2
        expose: true
        num_units: 1
        options: {enable_modules: ssl, nagios_check_http_params: -I 127.0.0.1 -H mojo.canonical.com
            -S -e '200' -s 'Mojo', servername: mojo.canonical.com, ssl_cert: 'include-base64:///srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/local/mojo.canonical.com.crt',
          ssl_certlocation: mojo.canonical.com.crt, ssl_chain: 'include-base64:///srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/local/godaddy_issuing.crt',
          ssl_chainlocation: godaddy_issuing.crt, ssl_key: 'include-base64:///srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/local/mojo.canonical.com.key',
          ssl_keylocation: mojo.canonical.com.key, vhost_http_template: 'include-base64:///srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/spec/mojo-how-to/production/../configs/mojo-how-to-production-vhost-http.template',
          vhost_https_template: 'include-base64:///srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/spec/mojo-how-to/production/../configs/mojo-how-to-production-vhost-https.template'}
      content-fetcher:
        charm: content-fetcher
        options: {archive_location: 'file:///home/ubuntu/mojo.tar', dest_dir: /srv/mojo}
      ksplice:
        charm: ksplice
        options: {accesskey: xxxREDACTEDxxx,
          source: ''}
      landscape:
        charm: landscape-client
        options: {account-name: standalone, ping-url: 'http://landscape.is.canonical.com/ping',
          registration-key: xxxREDACTEDxxx, tags: 'juju-managed, prodstack-instance',
          url: 'https://landscape.is.canonical.com/message-system'}
      nrpe: {charm: nrpe-external-master}

This shows the actual deployment options that would be passed to juju-deployer if we were to run the deploy phase with “config=services local=services-secret”.

    mojo deploy-diff --options config=services local=services-secret

    2016-01-04 10:19:28 [INFO] Pulling secrets from /srv/mojo/LOCAL/mojo-is-mojo-how-to/mojo-how-to/production to /srv/mojo/mojo-is-mojo-how-to/trusty/mojo-is-how-to/local
    services:
      modified:
        content-fetcher:
          cfg-config:
            archive_location: file:///home/ubuntu/mojo.tar
          env-config:
            archive_location: file:///home/ubuntu/mojo-241.tar

Here we see that the “services” deploy file specifies mojo.tar, but we’ve actually deployed mojo-241.tar to the environment. This is because any time there’s a new commit to the mojo source tree we update the environment accordingly.
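For readers curious about the shape of that output, the following Python sketch shows the kind of comparison involved: it takes the options from the specification and the options found in the environment, and reports only the keys whose values differ. It is an assumption-laden illustration, not juju-deployer's implementation; `diff_options` is a hypothetical name, and only the `cfg-config` and `env-config` labels are borrowed from the output above.

```python
def diff_options(cfg, env):
    """Report per-service options that differ between spec and environment.

    Hypothetical helper. `cfg` and `env` map service names to option dicts.
    Only services present on both sides are compared; the result maps each
    modified service to its differing values under "cfg-config" (what the
    specification says) and "env-config" (what is actually deployed).
    """
    modified = {}
    for service in sorted(set(cfg) & set(env)):
        cfg_opts, env_opts = cfg[service], env[service]
        changed = [
            key
            for key in sorted(set(cfg_opts) | set(env_opts))
            if cfg_opts.get(key) != env_opts.get(key)
        ]
        if changed:
            modified[service] = {
                "cfg-config": {k: cfg_opts[k] for k in changed if k in cfg_opts},
                "env-config": {k: env_opts[k] for k in changed if k in env_opts},
            }
    return modified
```

Fed the content-fetcher options from the example, with `mojo.tar` in the specification and `mojo-241.tar` in the environment, it returns a single modified service whose only differing key is `archive_location`; the unchanged `dest_dir` option is omitted.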

The final new feature we’ve added is automatic checking of Juju status after each deploy phase. If the status check fails, Mojo will grab Juju logs from any failed unit for easier debugging, as well as printing a summary of Juju status. We’ve integrated lp:juju-wait as well so you can optionally use that to wait for steady state in your environment:

    # default deploy phase will now check juju status as well
    deploy
    # optionally also run juju-wait
    deploy wait=True
    # you may also want to upgrade charms, for instance, and then check juju status
    # and wait for the environment to reach steady state
    script config=upgrade-charms
    juju-check-wait

What’s Next?

In upcoming changes to Mojo, we’ll be continuing the theme of simplification in particular. As Mojo is used more widely within Canonical, we’ve seen many utility scripts being added to specification repositories to do things like manage floating IPs in OpenStack or run all nagios checks in an environment. We plan to integrate these and others directly into Mojo.

The addition of containerless projects has also laid the groundwork for adding support for userspace LXC, which is sorely needed in Mojo. We plan to address this soon.

We’ll be publicising changes to Mojo more frequently, as it’s nearly a year since our last update, and a lot has changed since then. Also, we’re planning to upload Mojo to Debian and Ubuntu rather than just providing it through a PPA on Launchpad.

Try it for yourself by following the quick start guide on https://mojo.canonical.com/, and please let us know what you’d like to see in Mojo via bug reports here.
